Light on Glass

Why do you start making a game engine?

Introductions

In my last post I wrote about how Retro Game Engine started: not as some grand “engine initiative,” but as a stubborn little D3D12 + Win32 prototype that slowly turned into a full pipeline. This post is the other half of that story. We covered the “how”. Now I want to dive into the “why”.

If I’m being honest, I didn’t build Retro Game Engine out of a desire to reinvent the wheel. I built it because, once I reconnected with a real CRT, I couldn’t unsee what modern engines were doing to the image…not out of incompetence, but by design.

Modern engines are incredible. I’ve used Unreal and Unity for close to a decade. I love them. This isn’t a dunk piece meant to say my engine is any better or even close to those. I’m not insane or naïve. Those engines are like a multi-purpose tool. You can do so much with them. Retro Game Engine is more like a Phillips screwdriver. It’s a tool with one purpose. At the end of this, though, if I succeed, it will do its job better than any multi-purpose tool could.

The Moment the Filter Broke

Let’s set the stage, so to speak. The spark that ignited this entire endeavor wasn’t some recent binge where I was obsessively tuning scanline sliders in Unreal at 2 a.m. (it would be fair to think that was the case). Nah, this wasn’t present momentum leading to a trigger point…it was hindsight.

For years I had seen CRT filters, built advanced post-process materials, and watched other people do the same. They looked cool, they scratched the itch in a way. They always seemed good enough when I compared them against the mental image from my childhood memories. I assumed any gap was pure nostalgia, not anything tangible.

The day that changed was the day I dragged home a $35 Sharp CRT from 1998, plugged in an old console (SNES), and actually started playing. I was immediately hit by a wave of experiences that are hard to articulate. In short, it was magic.

Motion didn’t look like a clean sequence of frozen frames played in rapid repetition. Bright objects didn’t just glow, they behaved like a physical light under glass. Pixels weren’t “pixels” in the way modern displays train your brain to expect. That is the part that really jumped out and stuck with me. The part that turned into an itch in my brain. Not “visual fidelity”. We are all aware modern displays are sharper. The thing that annoyed me was what came downstream of the old limitations.

Those limitations were real, physical ones. They didn’t just shape the hardware, they shaped the art direction. Games had to collaborate with the player’s imagination. They had to be bold with clean silhouettes and strong color choices. They had to have distinct worlds with less noise and more identity.

There’s a quote that keeps floating around in my head as I get older: “The enemy of art is the absence of limitations.” It’s one of those lines that feels more true as time goes on and the longer I’ve worked in Unreal and Unity. So when I saw the real CRT again, this wasn’t an “oh that looks nicer” moment, it was a “we lost an entire relationship between hardware, art, and the player” moment.

So after that, the filters I’d seen started to feel flat when I revisited them. Like a mask glued on top of a finished image. And that is when my “why” turned into a technical exploration.

A CRT Isn’t a Camera Problem

The problem isn’t that Unreal or Unity can’t do cool things, they obviously can. They are straight-up beasts. The problem is that modern engines are all built around a particular idea of what rendering is:

A virtual camera looks at a 3D world, the engine renders the frame, then you apply post-processing to stylize or correct it, then present it.

Scene → Camera → Framebuffer → Post → Present Final Output

Unity even describes post-processing in terms of camera/film properties. That isn’t an insult, it’s literally how the feature is positioned.

Unreal is similar: you can make post-process material graphs, and by default they are applied at the end of the render pipeline, after tonemapping and TAA. At that point you are working with the final LDR color, not the raw scene lighting.
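To make the “final LDR color” point concrete, here is a tiny sketch of what a tonemap does to two very different light sources. It assumes a plain Reinhard curve (x / (1 + x)) as a stand-in; Unreal’s real tonemapper is a different, filmic curve, and the function names and intensity values are made up for illustration.

```python
def reinhard(hdr: float) -> float:
    """Compress a scene-linear value into [0, 1). Stand-in curve, not Unreal's."""
    return hdr / (1.0 + hdr)


def to_8bit(ldr: float) -> int:
    """Clamp to [0, 1] and quantize to an 8-bit display code."""
    return round(min(max(ldr, 0.0), 1.0) * 255)


# Hypothetical scene-linear intensities: the sun carries 100x the lamp's energy.
lamp = 50.0
sun = 5000.0

lamp_ldr = to_8bit(reinhard(lamp))
sun_ldr = to_8bit(reinhard(sun))

print(lamp_ldr, sun_ldr)  # 250 255
```

A pass that runs after this step only ever sees 250 and 255. It has no way to know that one source carried a hundred times the energy of the other, which is exactly the information a display simulation wants.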

The camera metaphor is perfect for the goal of modern engines like these. They aim to render huge worlds with realistic lighting, animate them, and keep them stable at 60 FPS while a player does unpredictable things.

But a CRT isn’t a camera filming the world. It’s a physical device that generates an image as the output of a physical process. It does this by doing the following:

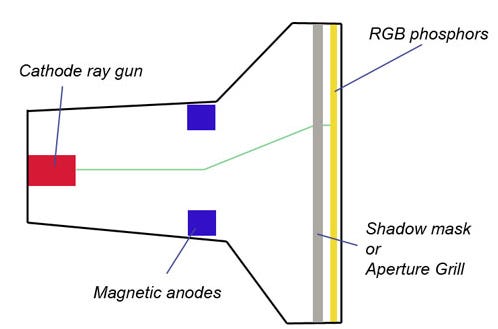

An electron beam scans across the screen over time, typically from top to bottom and left to right.

Phosphors sitting on the screen get excited by the beam and emit light.

As the phosphors lose their excitation over time, the light they emit fades, and we perceive that as decay.

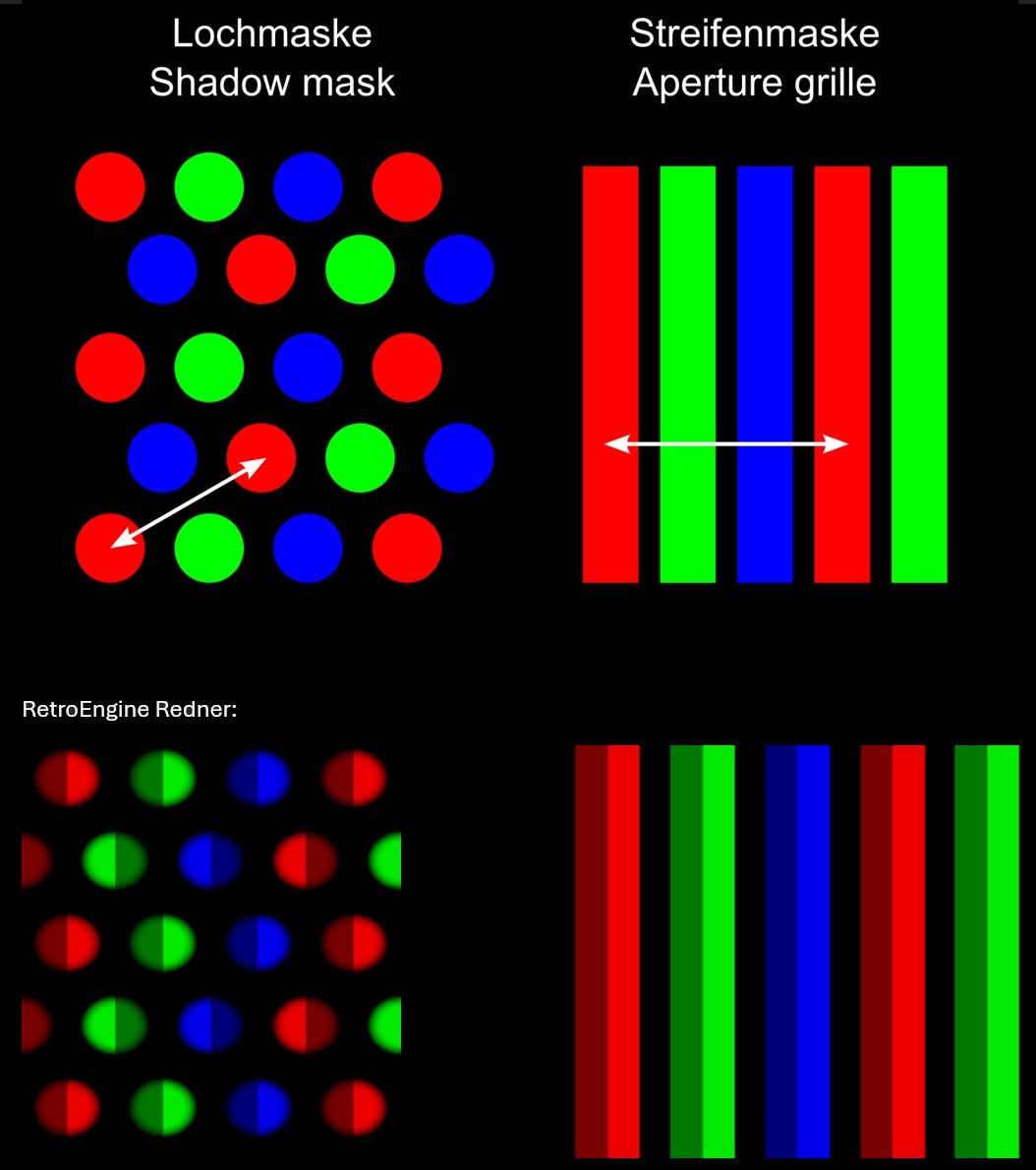

The mask geometry (shadow mask, aperture grille, etc.) affects how light lands and mixes.

The frame is never a single instant; it’s an accumulation of light integrated over time.
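The scan-and-decay part of that list can be sketched in a few lines. This is a toy model under loud assumptions: one brightness value per scanline, a single exponential decay (real phosphors are messier), no mask at all, and a made-up decay constant `tau_lines` measured in scanline-times. It is not how Retro Game Engine implements it, just the idea.

```python
import math


def simulate_scan(signal, frames, tau_lines=2.0):
    """Sweep a beam down `signal` (one brightness per scanline) for `frames`
    full passes and return each line's phosphor energy at the end.

    tau_lines is a hypothetical decay constant in units of scanline-times.
    """
    decay = math.exp(-1.0 / tau_lines)  # fraction of energy kept per line-time
    phosphor = [0.0] * len(signal)
    for _ in range(frames):
        for line, level in enumerate(signal):
            # Every phosphor fades a little during this line-time...
            phosphor = [energy * decay for energy in phosphor]
            # ...and only the line under the beam gets re-excited.
            phosphor[line] += level
    return phosphor


# A perfectly uniform signal, sampled the instant the beam reaches the bottom.
glow = simulate_scan([1.0, 1.0, 1.0, 1.0], frames=1)
print([round(energy, 3) for energy in glow])  # [0.223, 0.368, 0.607, 1.0]
```

Even with a flat input, the screen is not flat at any given instant: the top lines have already faded by the time the bottom line lights up. The “image” only exists once your eye integrates that over time.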

That’s not a post-process overlay or filter effect; it’s an entirely different mental model of what it means to draw or render an image. I think this is why I struggled when trying to bolt this onto a modern engine. The foundations of the two models are just so fundamentally different. At this point, I was already beginning to consider my options. I was half inclined to give up.

By this time, though, I found I was playing almost entirely on the CRT. I was discovering old consoles and games I had never played as a 90s kid, and thus had no real attachment to or nostalgia for. This brought me back to my earlier statement. We lost something. I felt compelled to consider how I could still achieve my goal.

Uphill Battle

I went back to Unreal. As stated before, post-processing came after tone mapping, color grading, and anti-aliasing. I would have to start bending the engine too much to get earlier in the pipeline. I started doing research. I’m not a career graphics researcher. I’m learning this in public. If I get terminology slightly wrong, that’s on me.

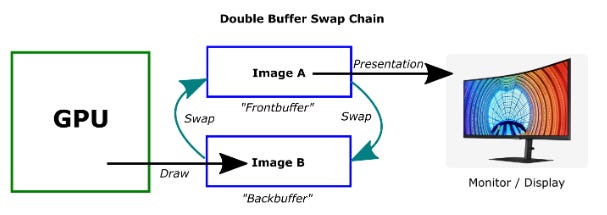

Let’s start off with a swap chain. It’s simply the set of buffers the GPU uses to render and display frames without causing tearing. The driver rotates through those buffers so frames can be presented smoothly. Each present flips/swaps which buffer is being displayed.
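Here is a toy model of that rotation, just to show the flip. It is not a real graphics API: `ToySwapChain`, `render`, and `present` are hypothetical names, and a real swap chain has more going on (vsync, queued frames, and so on).

```python
class ToySwapChain:
    """Toy model of a flip-style swap chain; not a real graphics API."""

    def __init__(self, buffer_count=2):
        self.buffers = [None] * buffer_count  # contents of each buffer
        self.back = 0       # index the renderer draws into next
        self.front = None   # index currently on screen (None before first present)

    def render(self, frame):
        """Draw a frame into the current back buffer."""
        self.buffers[self.back] = frame

    def present(self):
        """Show the finished back buffer, then rotate to the next buffer."""
        self.front = self.back
        self.back = (self.back + 1) % len(self.buffers)
        return self.buffers[self.front]


chain = ToySwapChain(buffer_count=2)
shown = []
for frame in ["frame 0", "frame 1", "frame 2"]:
    chain.render(frame)
    shown.append(chain.present())

print(shown)          # ['frame 0', 'frame 1', 'frame 2']
print(chain.buffers)  # ['frame 2', 'frame 1']
```

Notice that in this toy model buffer 0 has been overwritten by frame 2. Nothing in the rotation itself remembers older frames, so anything that should persist from one frame to the next has to live in a buffer you manage yourself.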

Game engines abstract this away from developers because the typical game dev doesn’t want to think about backbuffer rotation and frame pacing when they are placing foliage in a scene. It’s just a hassle, so the engine handles it for you. But this also means good luck modifying it without altering the engine. Most people don’t want to think about it.

But I did want to think about it. At this point I was convinced that if I wanted a display simulation that carried energy over time, a memory of light that had hit the screen, the swap chain was something I couldn’t ignore. The lifetime of the buffers, when I present them, what I preserve from one to the next, what accumulates over time. That was all stuff I cared a lot about. The temporal setup in modern engines is aimed at temporal anti-aliasing, motion blur, temporal upscalers, and the like. These are brilliant, but they are trying to stabilize an image with lots of detail and motion, not simulate a display. This discovery left me at a crossroads.

Getting Out of Control

I want to be really clear: I’m grateful for Unreal and Unity. Blueprints were my first real exposure to “coding” as a 3D artist. Unity C# scripting made systems feel more approachable over time. Both engines let me ship games and learn how real production works. Without that decade in both, Retro Game Engine wouldn’t exist. So this is certainly not an argument that I’m too good for those engines. Instead, they allowed me to keep learning and growing to the point where I understood the core assumptions those engines were making and had at least an idea of a path forward.

Once I understood how the CRT was physically behaving, I couldn’t settle for convincing masks anymore. I’m human, so I obsess compulsively when I get an idea like this. Nobody else might care. Nobody else might want to use it. It might not even look good or work. Regardless, I found myself constantly thinking about this. Playing more Zelda and Metal Gear Solid. Brainstorming some more. It began to consume me. More research, more gaming. It was a snowball out of control down a hillside now, and it seemed inevitable I’d be attempting to make my own game engine soon.

The Advantages of Owning It All

It’s really easy to point out all the cons of building your own engine. I could list the sheer amount of work, of learning, of fragility. Just sheer effort to get to something other engines do out of the box. You can probably think of several downsides right now without trying. So let’s focus on the advantages.

This is the part I’m proud of, because it’s something I didn’t even understand a year ago. Retro Game Engine owns the full frame lifecycle. I decide what the input signals are, what the display does with them, how time affects them, what gets presented and when. From input to output, I own it all. It’s a wild feeling. Is it perfect? No. But it’s all mine, and that becomes super liberating super fast, and it scales as the engine does. If I want something, I can do it. I’m not negotiating with someone else’s assumptions about what it is I want to do. This level of control is the difference between having a CRT look and having CRT behavior. I didn’t set out to achieve a CRT effect. Instead I studied and implemented each part of the physical CRT, and the output you see is the result of that. It’s not an artistic study, it’s a display simulation.

This also now empowers me (and hopefully others in the near future) to build a game that embraces that old relationship between limitation and creativity. Not by forcing a tool to bend that way, not by accident. But with intent from the core of it. And that is what I want to empower in myself and other devs. We shouldn’t be chasing accuracy and realism, we should be getting back to an era where games felt like they were meeting you halfway. Fewer options, but more identity.

Powering Off

Retro Game Engine isn’t here to replace Unreal or Unity. I’m not trying to compete with them. My engine isn’t trying to be everything at once. Just one focus: bringing back the magic of gaming from a bygone era. A relationship between signal and display.

But that focus opens up something interesting. It opens up a path where games can lean into limitation on purpose. Games that are less interested in chasing realism, and more interested in chasing identity. Maybe it’s only compelling to a handful of people like myself. I’d like to think there are a few indie devs out there who feel the same itch and don’t quite have the tool for it yet. I don’t know if others will be interested in making and playing those types of games again.

What I do know is that once I had gone down this road, understood what a CRT was actually doing, and studied how uniquely the games of that time were designed, I couldn’t go back to pretending filters were enough.

And that’s the reason Retro Game Engine exists.

I’d love to know what other aspects of building this game engine you’d like to hear more about. Let me know!

Great read! FWIW, it is possible to replace the entire tonemapper, it is sometimes done in NPR projects or when you really need something custom.